Introduction

When a company accidentally leaks half a million lines of its own code, the obvious reaction is concern.

But when it happens more than once, and the leaked material doesn’t appear to cause real damage, people start asking a different kind of question:

Was this really an accident?

That’s the situation surrounding Anthropic and its AI assistant Claude in 2026.

What initially looked like a routine mistake has now evolved into a broader discussion about AI security, operational discipline—and even strategic intent.

The 2026 Claude Code Leak: What Happened

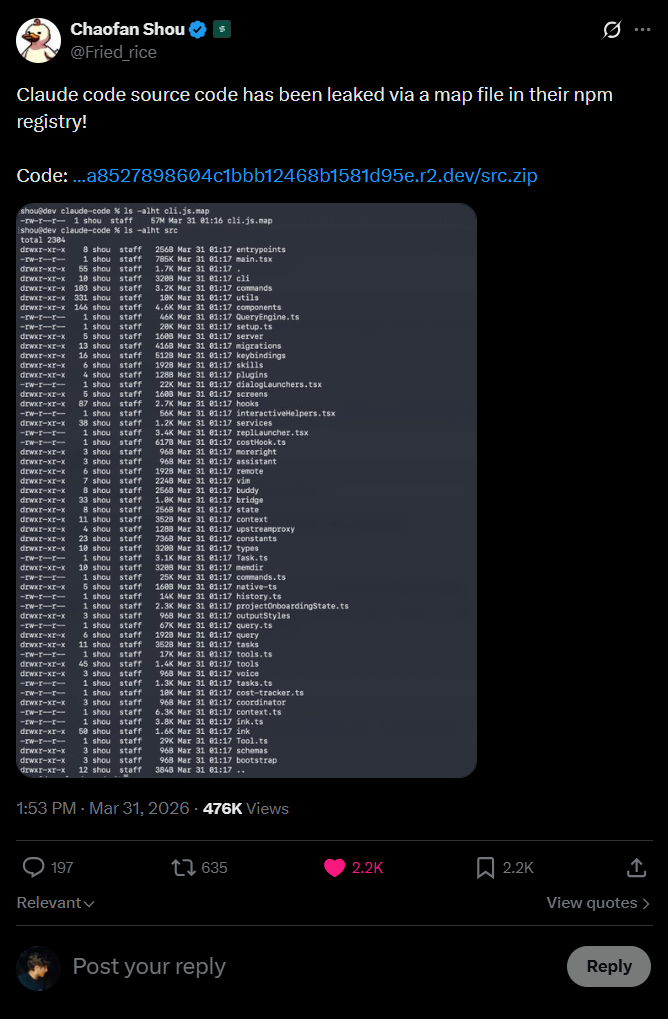

On March 31, 2026, during a routine release:

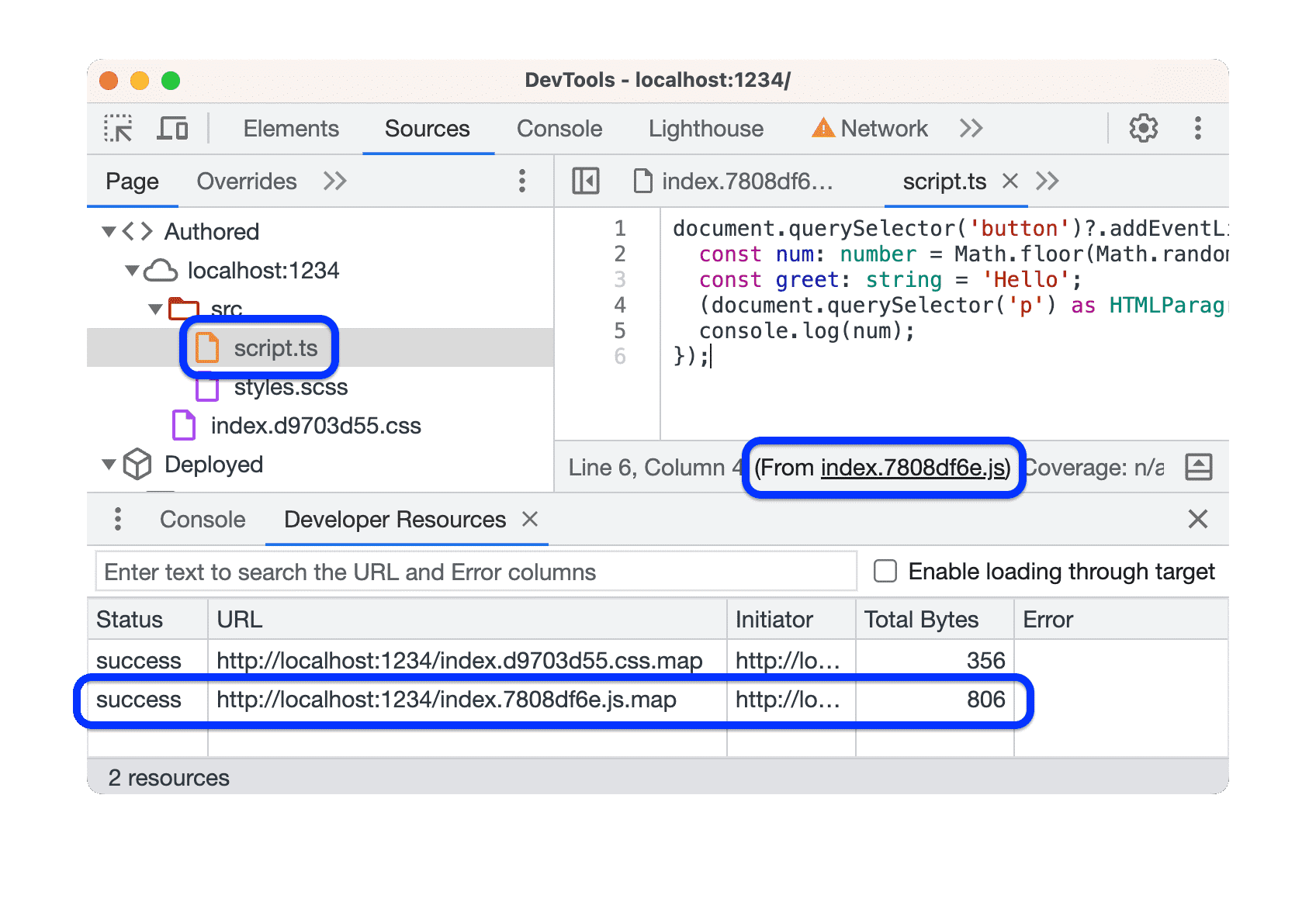

- A debug/source map file was mistakenly included

- That file exposed a public archive

- The archive contained:

  - More than 500,000 lines of internal code

  - Nearly 2,000 files

  - Core logic of “Claude Code”

There was:

- No external breach

- No hacking

- No exploit

Just a release pipeline failure
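A failure of this kind can in principle be caught by a pre-publish check. The following is a minimal sketch, not a description of Anthropic's actual pipeline: it assumes the release artifact is an unpacked directory on disk, and the function name `find_source_map_leaks` and the suffix list are hypothetical choices made for illustration.

```python
from pathlib import Path

# Hypothetical deny-list: file suffixes that should not ship in a release.
DEBUG_SUFFIXES = (".map",)
# Comment marker that bundlers append to JS/CSS files to point at a source map.
SOURCE_MAP_MARKER = "sourceMappingURL="
# File types that may carry the marker above.
SCANNED_SUFFIXES = {".js", ".mjs", ".cjs", ".css"}


def find_source_map_leaks(artifact_dir: str) -> list[str]:
    """Return relative paths of files that are, or reference, source maps."""
    root = Path(artifact_dir)
    findings = []
    for path in sorted(root.rglob("*")):
        if not path.is_file():
            continue
        rel = path.relative_to(root).as_posix()
        if path.name.endswith(DEBUG_SUFFIXES):
            # The file itself is a source map.
            findings.append(rel)
        elif path.suffix in SCANNED_SUFFIXES:
            # The file is shipped code; check whether it links to a source map.
            text = path.read_text(encoding="utf-8", errors="ignore")
            if SOURCE_MAP_MARKER in text:
                findings.append(rel)
    return findings
```

A release job could call `find_source_map_leaks("path/to/unpacked/package")` and refuse to publish when the returned list is non-empty.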

What Was Actually Exposed?

From a technical standpoint, the leak was significant but limited in scope.

What was visible:

- AI agent orchestration logic

- Internal tooling and workflows

- Experimental features and roadmap hints

- Autonomous agent behaviors

What was NOT exposed:

- Customer/user data

- API keys or credentials

- Core model weights

This distinction becomes important later.

The Earlier Incident Most People Ignored

Before this large-scale leak, there was already a smaller incident involving:

- Internal system prompts

- Behavioral tuning instructions

- Fragments of Claude’s internal configuration

At the time, it didn’t trigger major concern.

But now, viewed alongside the 2026 leak, it suggests:

This wasn’t a one-off error—it may reflect a pattern of exposure risk

The Internet Reaction: Faster Than Control

Once discovered:

- The code spread across GitHub within hours

- Thousands of forks appeared

- Developers began analyzing and replicating it

Anthropic responded quickly:

- Issued mass DMCA takedowns

- Removed thousands of repositories

But as expected:

Once code is public, containment becomes theoretical—not practical

The Controversial Theory: Was This Intentional?

Now to the part that’s fueling the most debate.

Across developer communities, a theory started circulating:

The claim:

This leak may have been a deliberate or semi-deliberate marketing move

The reasoning behind it:

- No Critical Damage

  - No sensitive user data exposed

  - No credentials leaked

  - No core models compromised

- Selective Exposure

  - Only tooling and orchestration layers were revealed

  - Not the core proprietary intelligence

- Massive Visibility

  - The leak generated global attention

  - Developers deeply engaged with Claude’s ecosystem

  - Free analysis, feedback, and reverse engineering

- Developer Adoption Boost

  - Engineers explored Claude Code internals

  - Some recreated versions

  - Others integrated similar ideas

From a purely strategic lens, this resembles:

A high-risk, high-reach “forced transparency” campaign

Reality Check: Is the Marketing Theory Valid?

It’s important to stay grounded here.

There is no official evidence that Anthropic intentionally leaked the code.

The more likely explanation:

- Weak release controls

- Process gaps

- Human error

However…

The situation creates an unusual contradiction:

- The leak was large

- The spread was massive

- But the actual damage was surprisingly limited

This gap is exactly why the theory exists.

Why This Incident Still Matters (Regardless of Intent)

Whether accidental or not, the implications are real.

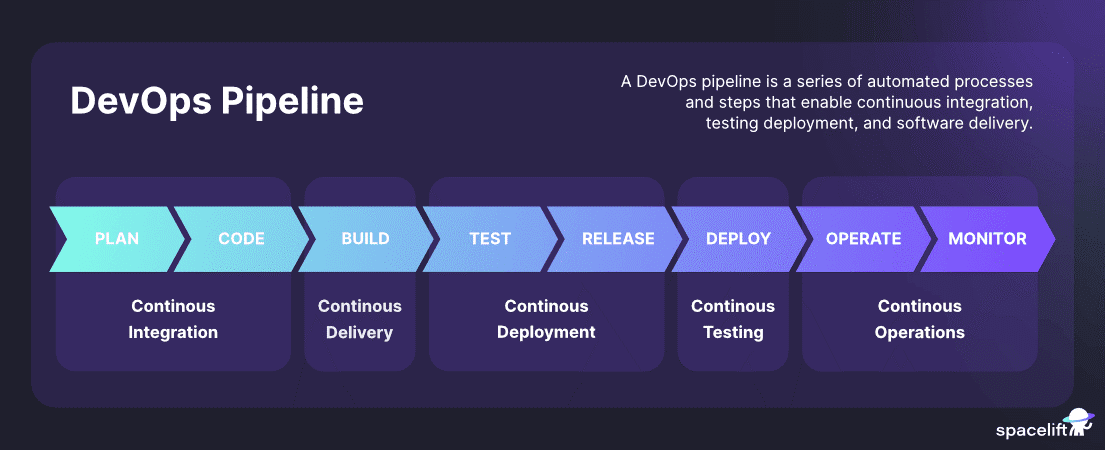

1. Operational Security Is Now a Core Risk

AI companies are no longer just research labs.

They are:

- Infrastructure providers

- Platform ecosystems

- Critical technology vendors

Which means:

Process failures = strategic failures

2. Intellectual Exposure Is Still Exposure

Even without sensitive data:

- Competitors gain architectural insights

- Internal design philosophy becomes visible

- Future direction gets partially revealed

3. Trust Is Built on Consistency

One incident → acceptable

Repeated incidents → questionable

This is where the earlier leak becomes significant.

4. The Industry Is Watching Closely

This incident has triggered:

- Internal audits across AI companies

- Stronger DevOps scrutiny

- Discussions about secure AI deployment pipelines
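One form that stronger pipeline scrutiny can take is an allowlist: rather than hunting for known-bad files, the release step rejects anything it was not explicitly told to ship. The sketch below illustrates the idea under stated assumptions. The pattern list, the function name `unexpected_files`, and the example file names are all hypothetical and not taken from any real release configuration.

```python
from fnmatch import fnmatchcase

# Hypothetical allowlist of glob patterns a release is permitted to contain.
# Note: in fnmatch-style patterns, "*" also matches path separators.
ALLOWED_PATTERNS = ["package.json", "README.md", "LICENSE", "dist/*.js"]


def unexpected_files(artifact_files, allowed_patterns=ALLOWED_PATTERNS):
    """Return files in the artifact that match no allowed pattern."""
    return [
        name
        for name in artifact_files
        if not any(fnmatchcase(name, pattern) for pattern in allowed_patterns)
    ]
```

With this approach a new file type, such as a source map or an archive, fails the check by default and has to be approved deliberately before it can ship.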

Bigger Picture: What This Reveals About AI Today

AI companies today are:

- Extremely advanced in model intelligence

- Rapid in product iteration

- But sometimes lagging in operational maturity

That imbalance is becoming visible.

Key Takeaways

1. Not All Leaks Are Equal

This one avoided critical damage—but still exposed valuable IP.

2. Patterns Matter More Than Incidents

The previous leak changes how this one is interpreted.

3. Narrative Shapes Perception

Even if accidental, repeated leaks invite alternative explanations.

4. Security Must Scale with Innovation

AI systems are only as secure as the processes around them.

Conclusion

The Claude Code leak sits in an uncomfortable gray area.

- Too large to ignore

- Too controlled to feel catastrophic

- Too repeated to dismiss completely

Whether it was:

- A mistake

- A systemic weakness

- Or something more strategic

One thing is clear:

In the AI era, what leaks can matter as much as what ships